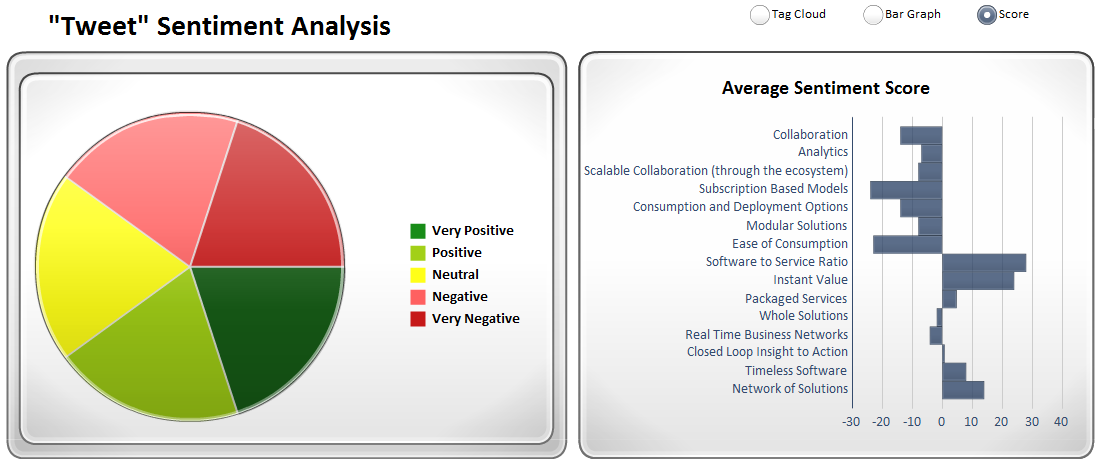

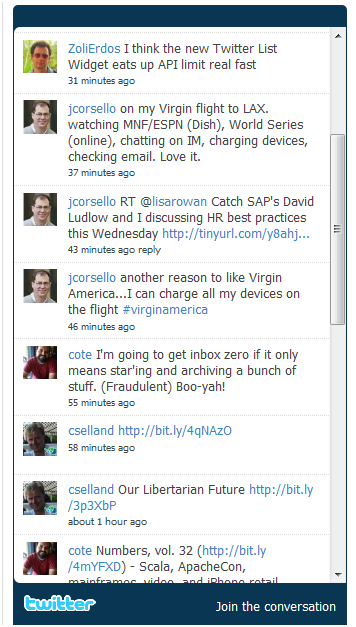

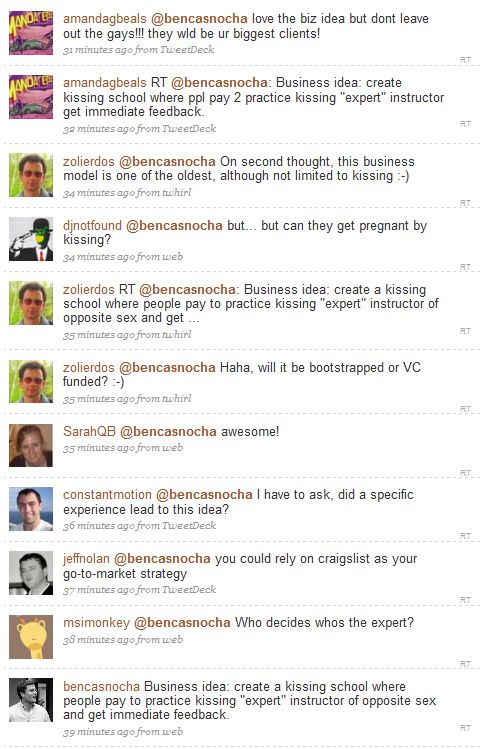

At the last minute I had to cancel my trip to the SAP Influencer Summit, but I am following it almost as if I were there, through the Tweet Stream. SAP has also provided a Virtual Environment where analysts, media and bloggers can participate interactively. Right now I am watching a live video on their On Demand Strategy (hm.. how appropriate: watching the On-Demand session on-demand). The Virtual Environment offers Twitter tools, including sentiment analysis based on SAP’s Business Objects technology.

(Cross-posted @ CloudAve)
